If you don’t give some love and attention to how you design your analysis cube and look at optimising processing then this is often fine at the very small cube size (under 2GB), but as you approach 10GB+ the first warning sign is usually horrendously long wait times for processing.

The analysis Services Performance Guide Section 4.0 (http://www.microsoft.com/download/en/details.aspx?displaylang=en&id=17303) can seem a little daunting, so I have summarised the three “quick wins”

The following tests use the 120 million row adventure works that you can get from this blog article (http://blogs.prodata.ie/post/Scaling-AdventureworksDW-Cube-for-BI-Testing-(2-of-2).aspx)

Note that there is a lot more “tuning” that you can do to improve processing performance, but these few tips are more the “basics” before you even look at tuning. I don’t want to see anyone ask me to look at RSS,their network card settings and jumbo frames when they are only using one out of 20 cores!

1. Partition Your Analysis Services Measure Groups to shorten processing time.

While partitioning can speed up queries in SSAS, it is more essential to allow the processing to happening in parallel, especially with the tendency for larger servers with 40, 64 or even 80 logical cores (pity that SSAS 2008R2 can only address 64 cores!). To prove this I ran two tests:

- The 120 million Internet Sales measure group with the default single partition

- The 120 million Internet Sales measure group partitioned by month

With only a single partition, both the data warehouse and the analysis services server are “idling”. we can see just a single core working hard . To make matters worse, most modern servers with power management or Turbo Boost CPU features and lots of cores will see this as as indication that the workload doesn’t really need any juice and will lower the clock speed appropriately (ouch).

Performance counters show us shifting about 260k rows per second and only using 2 threads (one for the reading and one for the writing)

Now we try that same processing with partitions by month and we get starkly different results. All the cores are humming along:

The performance counters are looking much healthier now with 758 k rows per second. Note we have 24 threads in use now. We can assume that 12 are being processes in parallel.

The improvement time wise is pretty good, from 15 minutes to 5 minutes.

2. Don’t use wide Fact tables

If you are only selecting 10 columns from the fact table and the table has 100 columns, then the number of rows packed per 8k page in the database engine is going to be very low and performance is going to really suffer as the data warehouse just can’t supply the rows fast enough.

If you need to bring in lots of additional data that is not needed for the cube, and processing time of measure groups is important, then consider three options:

a) creating a table with just the important attributes for the dimensional model and another “junk” table for attributes that are not needed by the multi-dimensional analysis services model.

b) Maybe you need a large sprawling data warehouse and your analysis services dimensional model should come form a smaller, leaner data mart. See http://thomasivarssonmalmo.wordpress.com/2012/02/18/the-data-warehouse-value-proposition/

c) Create a narrower version of the fact table for the cube to use, this could be a physical table (preferable) or an indexed view. I like the Indexed View if the situation is political data modelling wise – you can create a physical data mart in secret as ahem “tuning” and leave people to believe they have a single data warehouse.

Obviously some common sense is needed here. A “few” extra attributes aren’t going to hurt, but in row CLOB’s and very wide tables will not be good.

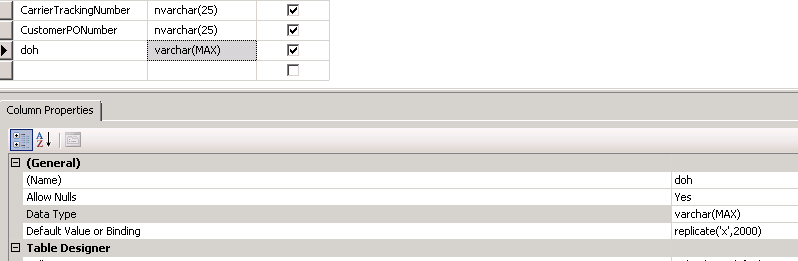

To prove this I added a column to the fact table which was 2,000 characters long and measured the increase in processing time from the baseline 15 minutes.

The table size grew from 17GB to a whopping 220 GB in size. More importantly our rows per second dropped when processing from 260k to about 1k (see perfmon screenshot below)

The chart below shows the difference in processing times for a single large partition. It went from 15 minutes to about 55 Hours when we added a lot of unwanted or wide columns to the fact table. Obviously the workload shifted from memory bound to storage and memory bound.

3. Watch your Threads

Analysis Services can default to only allow 64 threads for storage operations such as processing. On your shining new 40 core server this may not be enough and you will see much queuing on the “processing pool job queue length”.

During processing watch to see if you fail to achieve > 80% of CPU and the Idle threads comes down to zero. This is a sign that you need to increase the Threadpool\Process\MaxThreads setting.

we can see from the high Idle threads and lack of queuing, that we haven’t hit the the limit yet (see below). We are not too bothered as the CPU is avg 94%, so more threads would just create context switching.

If you want to process more than TEN partitions in parallel you will need to also change the maximum database connections allowed by analysis services (see below)

4. Things that may not help as much as you think they would – Table Partitioning

On data warehouses with under say 30Gb size fact tables, the need to partition the analysis services cube comes much sooner than the need to partition the data warehouse. Sure its a best practice to partition them both, but bear in mind the partitioning of the data warehouse will often not translate into “massive” performance gains for Analysis Services Processing.

SQL Enterprise for example supports “merry go round” table scans where multiple queries can use the same table scan results.(http://sqlserverpedia.com/blog/sql-server-bloggers/merry-go-round-scans/), which mitigates this scenario a lot.

In our sample 120 million row AdventureWorksDW, partitioning of the sample data warehouse led to about a 5% improvement in throughput. Not bad, but not as good as the 300% improvement with partitioning the Analysis Services Measure group !